Abstract

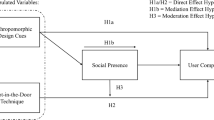

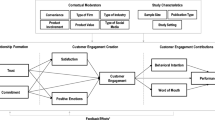

Although chatbots are increasingly deployed in customer service to reduce the burden of human labor, and sometimes to replace human employees in online shopping, it remains challenging to ensure that consumers evaluate chatbot service favorably and go on to make purchases. Anthropomorphism, the exhibition of human-like traits by non-human entities, is considered a key principle for fostering customers' positive evaluation of chatbot service and their purchase decisions. However, anthropomorphic features should be planned and rolled out cautiously, because they can both help build customer trust and increase customer overload. To understand how customers process and react to chatbot anthropomorphism, this study draws on Wixom and Todd's model and social information processing theory. It examines how object-based social beliefs about anthropomorphic chatbots (i.e., chatbot warmth and chatbot competence) influence service evaluation and customer purchase by generating behavioral beliefs (i.e., trust in chatbot and chatbot overload). The research model was tested with a “lab-in-the-field” experiment (N = 212) and two scenario-based experiments (N = 124 and N = 232). The results showed that chatbot warmth and competence had significant effects on trust in chatbot and chatbot overload. Trust in chatbot and chatbot overload, in turn, significantly affected service evaluation, which subsequently affected customer purchase. Implications for theory and practice are discussed.

Notes

Taobao (https://www.taobao.com/) provides the features used to design the experimental conditions, i.e., to manipulate the chatbot cues.

Tencent Meeting (https://meeting.tencent.com/) provides screen sharing and screen recording for communication.

Warmth_1 refers to the chatbot with verbal warmth cues; warmth_2 refers to the chatbot with both verbal and non-verbal warmth cues.

References

Ahmad, R., Siemon, D., Gnewuch, U., & Robra-Bissantz, S. (2022). Designing personality-adaptive conversational agents for mental health care. Information Systems Frontiers, 24(3), 923–943. https://doi.org/10.1007/s10796-022-10254-9

Ajzen, I. (1991). The theory of planned behavior. Organizational Behavior and Human Decision Processes, 50(2), 179–211.

Araujo, T. (2018). Living up to the chatbot hype: The influence of anthropomorphic design cues and communicative agency framing on conversational agent and company perceptions. Computers in Human Behavior, 85, 183–189. https://doi.org/10.1016/j.chb.2018.03.051

Behera, R. K., Bala, P. K., & Ray, A. (2021). Cognitive chatbot for personalised contextual customer service: Behind the scene and beyond the hype. Information Systems Frontiers, 1–22. https://doi.org/10.1007/s10796-021-10168-y

Blut, M., Wang, C., Wünderlich, N. V., & Brock, C. (2021). Understanding anthropomorphism in service provision: A meta-analysis of physical robots, chatbots, and other AI. Journal of the Academy of Marketing Science, 49(4), 632–658. https://doi.org/10.1007/s11747-020-00762-y

Cenfetelli, R. T., & Bassellier, G. (2009). Interpretation of formative measurement in information systems research. MIS Quarterly, 33(4), 689–707. https://doi.org/10.2307/20650323

Chan, E., & Ybarra, O. (2002). Interaction goals and social information processing: Underestimating one’s partners but overestimating one’s opponents. Social Cognition, 20(5), 409–439. https://doi.org/10.1521/soco.20.5.409.21126

Chen, X., Wei, S., & Rice, R. E. (2020). Integrating the bright and dark sides of communication visibility for knowledge management and creativity: The moderating role of regulatory focus. Computers in Human Behavior, 111, 106421. https://doi.org/10.1016/j.chb.2020.106421

Cheng, X., Bao, Y., Zarifis, A., Gong, W., & Mou, J. (2022a). Exploring consumers’ response to text-based chatbots in e-commerce: The moderating role of task complexity and chatbot disclosure. Internet Research, 32(2), 496–517. https://doi.org/10.1108/INTR-08-2020-0460

Cheng, X., Zhang, X., Cohen, J., & Mou, J. (2022b). Human vs. AI: Understanding the impact of anthropomorphism on consumer response to chatbots from the perspective of trust and relationship norms. Information Processing & Management, 59(3), 102940. https://doi.org/10.1016/j.ipm.2022.102940

Chien, S.-Y., Lin, Y.-L., & Chang, B.-F. (2022). The effects of intimacy and proactivity on trust in human-humanoid robot interaction. Information Systems Frontiers. https://doi.org/10.1007/s10796-022-10324-y

Cohen, J. (1988). Set correlation and contingency tables. Applied Psychological Measurement, 12(4), 425–434. https://doi.org/10.1177/014662168801200410

Crolic, C., Thomaz, F., Hadi, R., & Stephen, A. T. (2022). Blame the bot: Anthropomorphism and anger in customer–chatbot interactions. Journal of Marketing, 86(1), 132–148. https://doi.org/10.1177/00222429211045687

Davis, J. M., & Agrawal, D. (2018). Understanding the role of interpersonal identification in online review evaluation: An information processing perspective. International Journal of Information Management, 38(1), 140–149. https://doi.org/10.1016/j.ijinfomgt.2017.08.001

Ehrke, F., Bruckmüller, S., & Steffens, M. C. (2020). A double-edged sword: How social diversity affects trust in representatives via perceived competence and warmth. European Journal of Social Psychology, 50, 1540–1554. https://doi.org/10.1002/ejsp.2709

Epley, N., Waytz, A., & Cacioppo, J. T. (2007). On seeing human: A three-factor theory of anthropomorphism. Psychological Review, 114(4), 864–886. https://doi.org/10.1037/0033-295X.114.4.864

Faul, F., Erdfelder, E., Lang, A.-G., & Buchner, A. (2007). G*Power 3: A flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behavior Research Methods, 39(2), 175–191. https://doi.org/10.3758/BF03193146

Fiske, S. T., Cuddy, A. J. C., Glick, P., & Xu, J. (2002). A model of (often mixed) stereotype content: Competence and warmth respectively follow from perceived status and competition. Journal of Personality and Social Psychology, 82(6), 878–902. https://doi.org/10.1037/0022-3514.82.6.878

Fornell, C., & Larcker, D. F. (1981). Structural equation models with unobservable variables and measurement error: Algebra and statistics. Journal of Marketing Research, 18(3), 382. https://doi.org/10.2307/3150980

Fu, S., Li, H., Liu, Y., Pirkkalainen, H., & Salo, M. (2020). Social media overload, exhaustion, and use discontinuance: Examining the effects of information overload, system feature overload, and social overload. Information Processing & Management, 57(6), 102307. https://doi.org/10.1016/j.ipm.2020.102307

George, D., & Mallery, P. (2019). IBM SPSS statistics 26 step by step: A simple guide and reference. Routledge. https://doi.org/10.4324/9780429056765

Go, E., & Sundar, S. S. (2019). Humanizing chatbots: The effects of visual, identity and conversational cues on humanness perceptions. Computers in Human Behavior, 97, 304–316. https://doi.org/10.1016/j.chb.2019.01.020

Gong, T., Choi, J. N., & Murdy, S. (2016). Does customer value creation behavior drive customer well-being? Social Behavior and Personality, 44(1), 59–75. https://doi.org/10.2224/sbp.2016.44.1.59

Hair, J. F., Black, W. C., Babin, B. J., Anderson, R. E., & Tatham, R. L. (2006). Multivariate data analysis (6th ed.). Prentice-Hall International Inc. http://www.sciepub.com/reference/219114. Accessed 10 Oct 2022.

Hair, J. F., Jr., Hult, G. T. M., Ringle, C. M., & Sarstedt, M. (2021). A primer on partial least squares structural equation modeling (PLS-SEM). Sage Publications.

Han, E., Yin, D., & Zhang, H. (2022). Bots with feelings: Should AI agents express positive emotion in customer service? Information Systems Research, 1–16. https://doi.org/10.1287/isre.2022.1179

Hayes, A. F., & Scharkow, M. (2013). The relative trustworthiness of inferential tests of the indirect effect in statistical mediation analysis: Does method really matter? Psychological Science, 24(10), 1918–1927. https://doi.org/10.1177/0956797613480187

Hendriks, F., Ou, C. X. J., Khodabandeh Amiri, A., & Bockting, S. (2020). The power of computer-mediated communication theories in explaining the effect of chatbot introduction on user experience. Hawaii International Conference on System Sciences. https://doi.org/10.24251/HICSS.2020.034

Hildebrand, C., & Bergner, A. (2021). Conversational robo advisors as surrogates of trust: Onboarding experience, firm perception, and consumer financial decision making. Journal of the Academy of Marketing Science, 49(4), 659–676. https://doi.org/10.1007/s11747-020-00753-z

Jarvenpaa, S. L., & Leidner, D. E. (1999). Communication and trust in global virtual teams. Organization Science, 10(6), 791–815.

Jiang, Y. (2023). Make chatbots more adaptive: Dual pathways linking human-like cues and tailored response to trust in interactions with chatbots. Computers in Human Behavior, 138, 107485. https://doi.org/10.1016/j.chb.2022.107485

Keh, H. T., & Sun, J. (2018). The differential effects of online peer review and expert review on service evaluations: The roles of confidence and information convergence. Journal of Service Research, 21(4), 474–489. https://doi.org/10.1177/1094670518779456

Kim, G., Shin, B., & Lee, H. G. (2009). Understanding dynamics between initial trust and usage intentions of mobile banking. Information Systems Journal, 19(3), 283–311. https://doi.org/10.1111/j.1365-2575.2007.00269.x

Kim, J. H., Kim, M., Kwak, D. W., & Lee, S. (2022). Home-tutoring services assisted with technology: Investigating the role of artificial intelligence using a randomized field experiment. Journal of Marketing Research, 59(1), 79–96. https://doi.org/10.1177/00222437211050351

Kumar, V., Rajan, B., Salunkhe, U., & Joag, S. G. (2022). Relating the dark side of new-age technologies and customer technostress. Psychology & Marketing, 39(12), 2240–2259. https://doi.org/10.1002/mar.21738

Kyung, N., & Kwon, H. E. (2022). Rationally trust, but emotionally? The roles of cognitive and affective trust in laypeople’s acceptance of AI for preventive care operations. Production and Operations Management, 1–20. https://doi.org/10.1111/poms.13785

Leong, L.-Y., Hew, T.-S., Ooi, K.-B., Metri, B., & Dwivedi, Y. K. (2022). Extending the theory of planned behavior in the social commerce context: A Meta-Analytic SEM (MASEM) Approach. Information Systems Frontiers. https://doi.org/10.1007/s10796-022-10337-7

Lewis, B. R., Templeton, G., & Byrd, T. (2005). A methodology for construct development in MIS research. European Journal of Information Systems, 14(4), 388–400. https://doi.org/10.1057/palgrave.ejis.3000552

Li, L., Lee, K. Y., Emokpae, E., & Yang, S.-B. (2021). What makes you continuously use chatbot services? Evidence from Chinese online travel agencies. Electronic Markets, 31(3), 575–599. https://doi.org/10.1007/s12525-020-00454-z

Lou, C., Kang, H., & Tse, C. H. (2022). Bots vs. humans: How schema congruity, contingency-based interactivity, and sympathy influence consumer perceptions and patronage intentions. International Journal of Advertising, 41(4), 655–684. https://doi.org/10.1080/02650487.2021.1951510

Lowry, P. B., & Gaskin, J. (2014). Partial least squares (PLS) structural equation modeling (SEM) for building and testing behavioral causal theory: When to choose it and how to use it. IEEE Transactions on Professional Communication, 57(2), 123–146. https://doi.org/10.1109/TPC.2014.2312452

Lu, J., Zhang, Z., & Jia, M. (2019). Does servant leadership affect employees’ emotional labor? A social information-processing perspective. Journal of Business Ethics, 159(2), 507–518. https://doi.org/10.1007/s10551-018-3816-3

Luo, X., Qin, M. S., Fang, Z., & Qu, Z. (2021). Artificial intelligence coaches for sales agents: Caveats and solutions. Journal of Marketing, 85(2), 14–32. https://doi.org/10.1177/0022242920956676

Mason, C. H., & Perreault, W. D. (1991). Collinearity, power, and interpretation of multiple regression analysis. Journal of Marketing Research, 28(3), 268–280. https://doi.org/10.1177/002224379102800302

Mostafa, R. B., & Kasamani, T. (2022). Antecedents and consequences of chatbot initial trust. European Journal of Marketing, 56(6), 1748–1771. https://doi.org/10.1108/EJM-02-2020-0084

Moussawi, S., Koufaris, M., & Benbunan-Fich, R. (2021). How perceptions of intelligence and anthropomorphism affect adoption of personal intelligent agents. Electronic Markets, 31(2), 343–364. https://doi.org/10.1007/s12525-020-00411-w

Nguyen, Q. N., Ta, A., & Prybutok, V. (2019). An integrated model of voice-user interface continuance intention: The gender effect. International Journal of Human-Computer Interaction, 35(15), 1362–1377. https://doi.org/10.1080/10447318.2018.1525023

Nguyen, T., Quach, S., & Thaichon, P. (2022). The effect of AI quality on customer experience and brand relationship. Journal of Consumer Behaviour, 21(3), 481–493. https://doi.org/10.1002/cb.1974

Nunnally, J. C. (1978). Psychometric theory (2nd ed.). McGraw-Hill.

Petter, S., Straub, D., & Rai, A. (2007). Specifying formative constructs in information systems research. MIS Quarterly, 31(4), 623–656. https://doi.org/10.2307/25148814

Pizzi, G., Vannucci, V., Mazzoli, V., & Donvito, R. (2023). I, chatbot! The impact of anthropomorphism and gaze direction on willingness to disclose personal information and behavioral intentions. Psychology & Marketing, 40(7), 1372–1387. https://doi.org/10.1002/mar.21813

Reinartz, W. J., Haenlein, M., & Henseler, J. (2009). An empirical comparison of the efficacy of covariance-based and variance-based SEM. International Journal of Research in Marketing, 26(4), 332–344. https://doi.org/10.1016/j.ijresmar.2009.08.001

Rhim, J., Kwak, M., Gong, Y., & Gweon, G. (2022). Application of humanization to survey chatbots: Change in chatbot perception, interaction experience, and survey data quality. Computers in Human Behavior, 126, 107034. https://doi.org/10.1016/j.chb.2021.107034

Roccapriore, A. Y., & Pollock, T. G. (2023). I don’t need a degree, I’ve got abs: Influencer warmth and competence, communication mode, and stakeholder engagement on social media. Academy of Management Journal, 66(3), 979–1006. https://doi.org/10.5465/amj.2020.1546

Roy, R., & Naidoo, V. (2021). Enhancing chatbot effectiveness: The role of anthropomorphic conversational styles and time orientation. Journal of Business Research, 126, 23–34. https://doi.org/10.1016/j.jbusres.2020.12.051

Rutkowski, A., Saunders, C., & Wiener, M. (2013). Intended usage of a healthcare communication technology: Focusing on the role of IT-related overload. International Conference on Information Systems, 17. https://www.researchgate.net/publication/348372898. Accessed 10 Oct 2022.

Rzepka, C., Berger, B., & Hess, T. (2022). Voice Assistant vs. Chatbot – Examining the fit between conversational agents’ interaction modalities and information search tasks. Information Systems Frontiers, 24(3), 839–856. https://doi.org/10.1007/s10796-021-10226-5

Salancik, G. R., & Pfeffer, J. (1978). A social information processing approach to job attitudes and task design. Administrative Science Quarterly, 23(2), 224. https://doi.org/10.2307/2392563

Saunders, C., Wiener, M., Klett, S., & Sprenger, S. (2017). The impact of mental representations on ICT-related overload in the use of mobile phones. Journal of Management Information Systems, 34(3), 803–825. https://doi.org/10.1080/07421222.2017.1373010

Schanke, S., Burtch, G., & Ray, G. (2021). Estimating the impact of “humanizing” customer service chatbots. Information Systems Research, 32(3), 736–751. https://doi.org/10.1287/isre.2021.1015

Schuetzler, R. M., Grimes, G. M., & Giboney, J. S. (2020). The impact of chatbot conversational skill on engagement and perceived humanness. Journal of Management Information Systems, 37(3), 875–900. https://doi.org/10.1080/07421222.2020.1790204

Seeger, A.-M., Pfeiffer, J., & Heinzl, A. (2021). Texting with humanlike conversational agents: Designing for anthropomorphism. Journal of the Association for Information Systems, 22(4), 931–967. https://doi.org/10.17705/1jais.00685

Sharma, M., Joshi, S., Luthra, S., & Kumar, A. (2022). Impact of digital assistant attributes on millennials’ purchasing intentions: A multi-group analysis using PLS-SEM, Artificial Neural Network and fsQCA. Information Systems Frontiers. https://doi.org/10.1007/s10796-022-10339-5

Shen, X.-L., Li, Y.-J., Sun, Y., & Wang, N. (2018). Channel integration quality, perceived fluency and omnichannel service usage: The moderating roles of internal and external usage experience. Decision Support Systems, 109, 61–73. https://doi.org/10.1016/j.dss.2018.01.006

Sun, Y., Li, S., & Yu, L. (2022). The dark sides of AI personal assistant: Effects of service failure on user continuance intention. Electronic Markets, 32(1), 17–39. https://doi.org/10.1007/s12525-021-00483-2

Taylor, S., & Todd, P. (1995). Assessing IT usage: The role of prior experience. MIS Quarterly, 19(4), 561. https://doi.org/10.2307/249633

von Walter, B., Kremmel, D., & Jäger, B. (2022). The impact of lay beliefs about AI on adoption of algorithmic advice. Marketing Letters, 33(1), 143–155. https://doi.org/10.1007/s11002-021-09589-1

Wang, W., Chen, L., Xiong, M., & Wang, Y. (2021). Accelerating AI adoption with responsible AI signals and employee engagement mechanisms in health care. Information Systems Frontiers. https://doi.org/10.1007/s10796-021-10154-4

Weisband, S. P., Schneider, S. K., & Connolly, T. (1995). Computer-mediated communication and social information: Status salience and status differences. Academy of Management Journal, 38(4), 1124–1151. https://doi.org/10.2307/256623

Wixom, B. H., & Todd, P. A. (2005). A theoretical integration of user satisfaction and technology acceptance. Information Systems Research, 16(1), 85–102.

Xu, J. D., Benbasat, I., & Cenfetelli, R. T. (2013). Integrating service quality with system and information quality: An empirical test in the e-service context. MIS Quarterly, 37(3), 777–794. https://doi.org/10.25300/MISQ/2013/37.3.05

Zhu, Y., Zhang, J., Wu, J., & Liu, Y. (2022). AI is better when I’m sure: The influence of certainty of needs on consumers’ acceptance of AI chatbots. Journal of Business Research, 150, 642–652. https://doi.org/10.1016/j.jbusres.2022.06.044

Zogaj, A., Mähner, P. M., Yang, L., & Tscheulin, D. K. (2023). It’s a Match! The effects of chatbot anthropomorphization and chatbot gender on consumer behavior. Journal of Business Research, 155, 113412. https://doi.org/10.1016/j.jbusres.2022.113412

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Nos. 72002062 and 72332007), the Fundamental Research Funds for the Central Universities (No. JZ2023HGTB0277), and the Hunan Provincial Natural Science Foundation of China (No. 2022JJ40655).

Ethics declarations

Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Li, Y., Gan, Z. & Zheng, B. How do Artificial Intelligence Chatbots Affect Customer Purchase? Uncovering the Dual Pathways of Anthropomorphism on Service Evaluation. Inf Syst Front (2023). https://doi.org/10.1007/s10796-023-10438-x

DOI: https://doi.org/10.1007/s10796-023-10438-x